
Can anyone give me feedback on my portfolio? Any feedback/suggestions would be greatly appreciated.

You are serving very unoptimized images and svgs. Optimize your images! Your logo.svg is 20% larger than it needs to be. Your logo.webp is 70% larger than an optimized webp image (and even 28% larger than a png), so you are getting zero benefit from using webp and are actually hurting users. I was able to save about 70% on many of the svgs just by running them through svgomg with default settings. You can get even more savings because you are programmatically extracting just one part, so they don't even need to be svg files since you are treating them as plaintext. This will also help you avoid DOMParsing. Similarly, you can save 93% on an image like …

There are tons of great online tools to compare image compressions visually and pick the best one. Squoosh, for raster images (png, jpeg, …), lets you optimise your images with drag and drop.
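The percentages above are measured in two different ways ("X% larger than optimized" versus "saved Y%"), which are easy to mix up. Here is a minimal sketch of the arithmetic; the function names and the byte counts are made up for illustration and are not measurements from the site being reviewed.

```python
def percent_saved(original_bytes, optimized_bytes):
    """Savings relative to the ORIGINAL size ("I saved 70%")."""
    return 100 * (original_bytes - optimized_bytes) / original_bytes


def percent_larger(actual_bytes, optimized_bytes):
    """Overhead relative to the OPTIMIZED size ("it is 70% larger")."""
    return 100 * (actual_bytes - optimized_bytes) / optimized_bytes


# Illustrative sizes only: a 1700-byte file whose optimized version is 1000 bytes.
overhead = percent_larger(1700, 1000)
savings = percent_saved(1700, 1000)
print(f"{overhead:.1f}% larger is the same as saving {savings:.1f}%")
```

So a file that is 70% larger than it needs to be shrinks by roughly 41% once optimized, while a 70% saving means the original was more than three times the optimized size.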

All the server does in those cases is allow these things to run on severely outdated hardware.

You can run physics simulations and ML algorithms client side, and quite well using WASM or WebGPU.

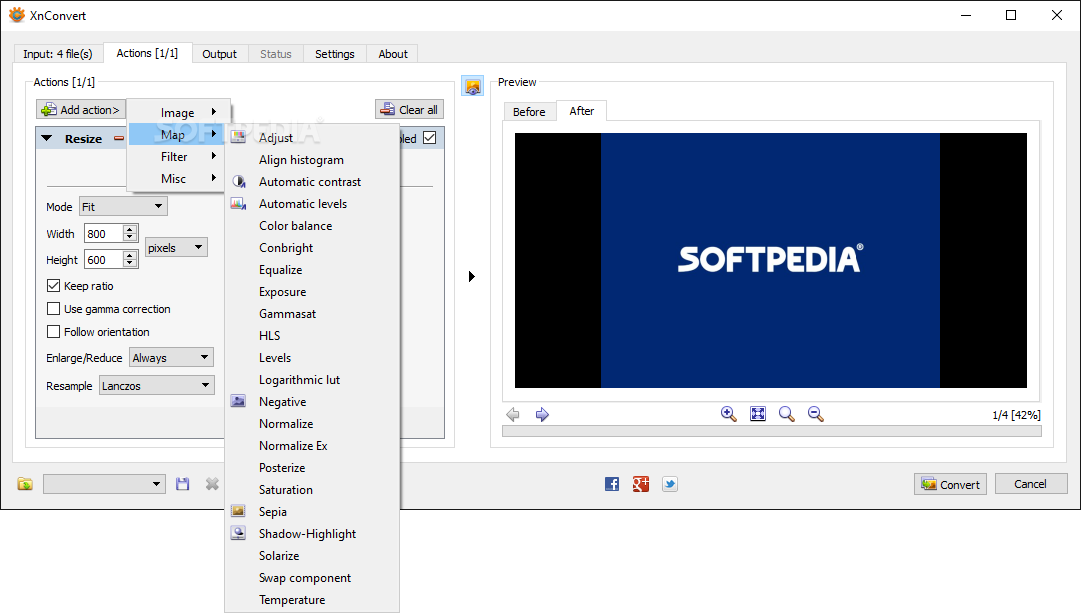

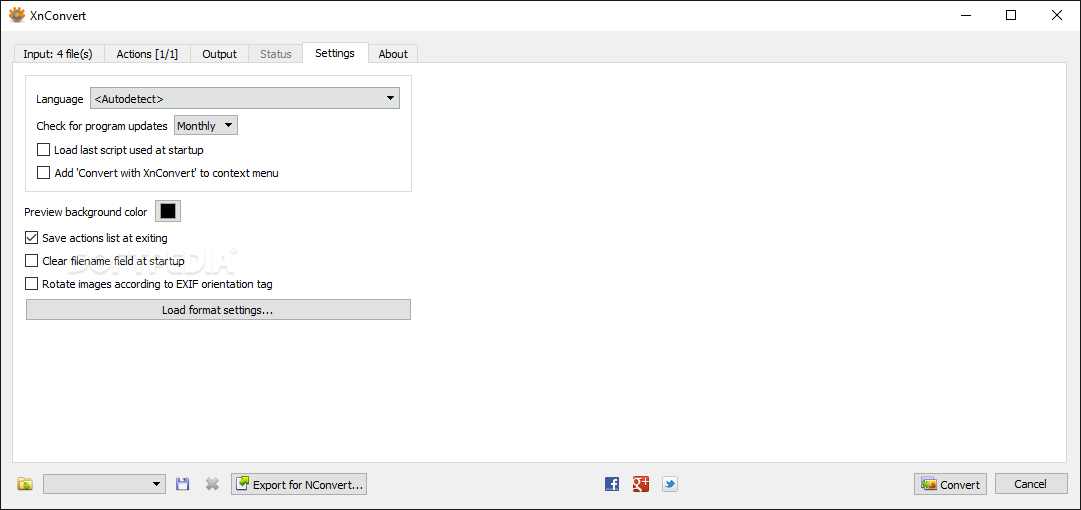

can work entirely client side and does complex math.

I understand that React is a front-end JavaScript framework, but can it be used alone to create web apps?

And this part of the comment is untrue, which they were pointing out.

XnConvert was created by the XnView developers. Both 32-bit and 64-bit editions are available.

The WebP encoder settings:

- Encode the alpha plane: 0 = none, 1 = compressed.
- Predictive filtering method for the alpha plane: 0 = none, 1 = fast, 2 = best.
- The compression value for alpha compression, between 0 and 100. A value of 100 gives lossless compression of alpha, while lower values result in lossy compression.
- One option is disabled by default to help compressibility.
- When enabled, the algorithm spends additional time optimizing the filtering strength to reach a well-balanced quality.
- Return a similar compression to that of JPEG but with less degradation.
- The strength of the deblocking filter, between 0 (no filtering) and 100 (maximum filtering). Higher values increase the strength of the filtering process applied after decoding the image. The higher the value, the smoother the image appears. Typical values are in the range of 20 to 50.
- Image hint: default, photo, picture, graph.
- A setting that controls the trade-off between encoding speed and the compressed file size and quality. With higher values, the encoder spends more time inspecting additional encoding possibilities and deciding on the quality gain. Lower values might result in faster processing at the expense of larger file size and lower compression quality.
- Choose 0 for no quality degradation and 100 for maximum degradation.
- Choose from: 0 = none, 1 = segment-smooth, 2 = pseudo-random dithering.
- The near-lossless encoding, between 0 (max loss) and 100 (off).
- The maximum number of segments to use: choose from 1 to 4.
- The maximum number of passes to target compression size or PSNR.
- The amplitude of the spatial noise shaping. Spatial noise shaping (SNS) refers to a general collection of built-in algorithms used to decide which area of the picture should use relatively fewer bits, and where else to better transfer these bits. The possible range goes from 0 (algorithm is off) to 100 (the maximal effect). The default value is 80.
- A target size (in bytes) to try to reach for the compressed output. The compressor makes several passes of partial encoding in order to get as close as possible to this target.
- Enable multi-threaded encoding: 0 = disabled, 1 = enabled.
- If needed, use sharp (and slow) RGB->YUV conversion.
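Most of the settings described here are plain integers with a fixed range, so a batch job can check them before handing them to an encoder. The following is a rough sketch under that assumption: the dictionary keys are hypothetical labels chosen for this example (they are not the names XnConvert or any other tool uses), and only the ranges come from the descriptions in this post.

```python
# Allowed (low, high) ranges, copied from the option descriptions in this post.
# The keys are hypothetical labels for illustration, not real option names.
RANGES = {
    "alpha_plane": (0, 1),          # 0 = none, 1 = compressed
    "alpha_filtering": (0, 2),      # 0 = none, 1 = fast, 2 = best
    "alpha_quality": (0, 100),      # 100 = lossless alpha
    "filter_strength": (0, 100),    # deblocking filter strength
    "degradation_limit": (0, 100),  # 0 = no degradation, 100 = maximum
    "preprocessing": (0, 2),        # 0 = none, 1 = segment-smooth, 2 = dithering
    "near_lossless": (0, 100),      # 0 = max loss, 100 = off
    "segments": (1, 4),             # maximum number of segments
    "sns_strength": (0, 100),       # spatial noise shaping amplitude
    "multi_threaded": (0, 1),       # 0 = disabled, 1 = enabled
}


def find_out_of_range(settings):
    """Return {label: message} for every setting that fails the range check."""
    problems = {}
    for label, value in settings.items():
        if label not in RANGES:
            problems[label] = "unknown setting"
            continue
        low, high = RANGES[label]
        if not low <= value <= high:
            problems[label] = f"{value} is outside {low}..{high}"
    return problems


# Five segments is one more than the documented maximum of 4.
print(find_out_of_range({"segments": 5, "sns_strength": 80}))
```

An empty result means every supplied value sits inside its documented range; it says nothing about whether the combination produces a good-looking image.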
